In the economy of user effort, be a bargain, not a scam

Treat user effort as a currency. To create a product users love, design the tradeoff curve of use case complexity to user effort with the same care you design your pricing scheme.


Many teams start with the MVP. But what if the key to shipping great products wasn’t starting small — but starting big? Could great products start at the finish line?

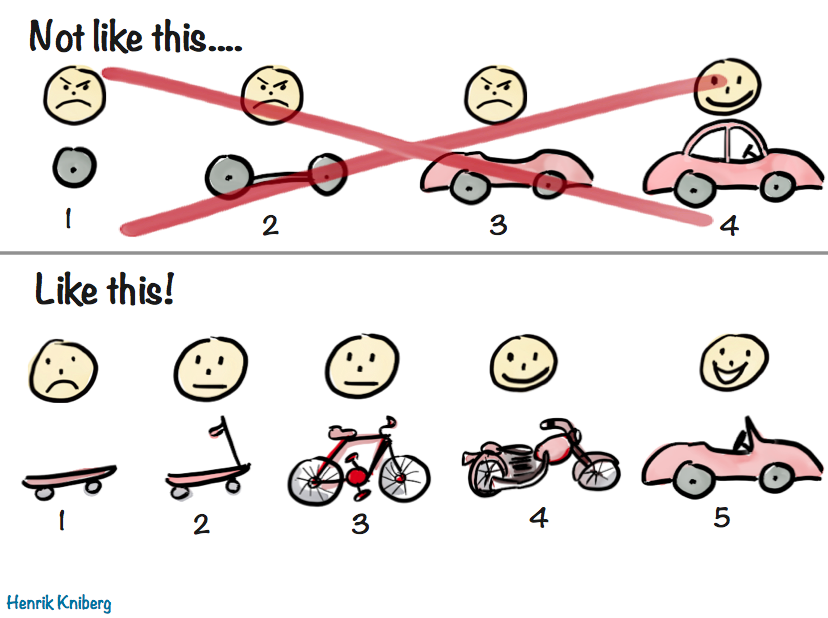

You may be familiar with this wonderful illustration and accompanying blog post by Henrik Kniberg about good MVPs:

It’s a very visual way to illustrate the age-old concept that a good MVP is not the one developed in isolation over months or years, grounded on assumptions about user needs and goals, but one that delivers value to users as early as possible, so that future iterations can take advantage of the lessons learned from real users.

I love Henrik’s metaphor so much that I have been using a similar system to flesh out product requirements and shipping goals, especially early on. It can be immediately understood by anyone who has seen Henrik’s illustration, and I find it can be a lot more pragmatic and flexible than the usual simple two-tiered system (core requirements and stretch goals). Additionally, I find this fits nicely into a fixed-time, variable-scope development process, such as Shape Up.

Please note that the concept of a North Star UI has no relation to the North Star Metric. While both serve as a guiding light for product decisions, and both are important, the North Star UI guides you in designing the product, whereas the North Star Metric is about evaluating success. To avoid confusion, I’ll refer to it as “North Star UI”, although it’s not about the UI per se, but the product vision on a deeper level.

The first three stages are much more concrete and pragmatic, as they directly affect what is being worked on. The more we go down the list, the less fleshed out specs are, as they need to allow room for customer input. This also allows us to outline future vision, without having to invest in it prematurely.

The most controversial of these is the last one: the hovercar, i.e. the North Star UI. It is the very antithesis of the MVP. The MVP describes what we can ship ASAP, whereas the North Star describes the most idealized goal, one we may never be able to ship.

It is easy to dismiss that as a waste of time, a purely academic exercise. “We’re all about shipping. Why would we spend time on something that may not even be feasible?” I hear you cry in Agile.

Stay with me for a moment, and please try to keep an open mind. Paradoxical as it may sound, fleshing out your North Star can actually save you time. How? Start counting.

At its core, this framework is about breaking down tough product design problems into three more manageable components:

One way to frame it is that 2 & 3 are the product version of tech debt. [1]

It’s important to understand what constraints are fair game to ignore for 1 and which are not. I often call these ephemeral or situational constraints. They are constraints that are not fundamental to the product problem at hand, but relate to the environment in which the product is being built and could be lifted or change over time. Things like:

Unlike ephemeral constraints, certain requirements are part of the problem description and cannot be ignored. Some examples from the case studies below:

While these may be addressed differently in different solutions, it would be an oxymoron to have a North Star that did not take them into account.

Nearly every domain of human endeavor has a version of divide and conquer: instead of solving a complex problem all at once, break it down into smaller, manageable components and solve them separately. Product design is no different.

This process really shines when you’re dealing with the kinds of tough product problems where at least two of these questions are hard, so breaking it down can do wonders for reducing complexity.

By solving these components separately, our product design process can more easily adapt to changes.

I have often seen “unimplementable” solutions become implementable down the line, due to changes in internal or external factors, or simply because someone had a lightbulb moment.

By addressing these components separately, when constraints get lifted all we need to reevaluate is our compromises. But without this modularization, our only solution is to go back to the drawing board. Unsurprisingly, companies often choose to simply miss out on the opportunity, because it’s cheaper (or seems cheaper) to do so.

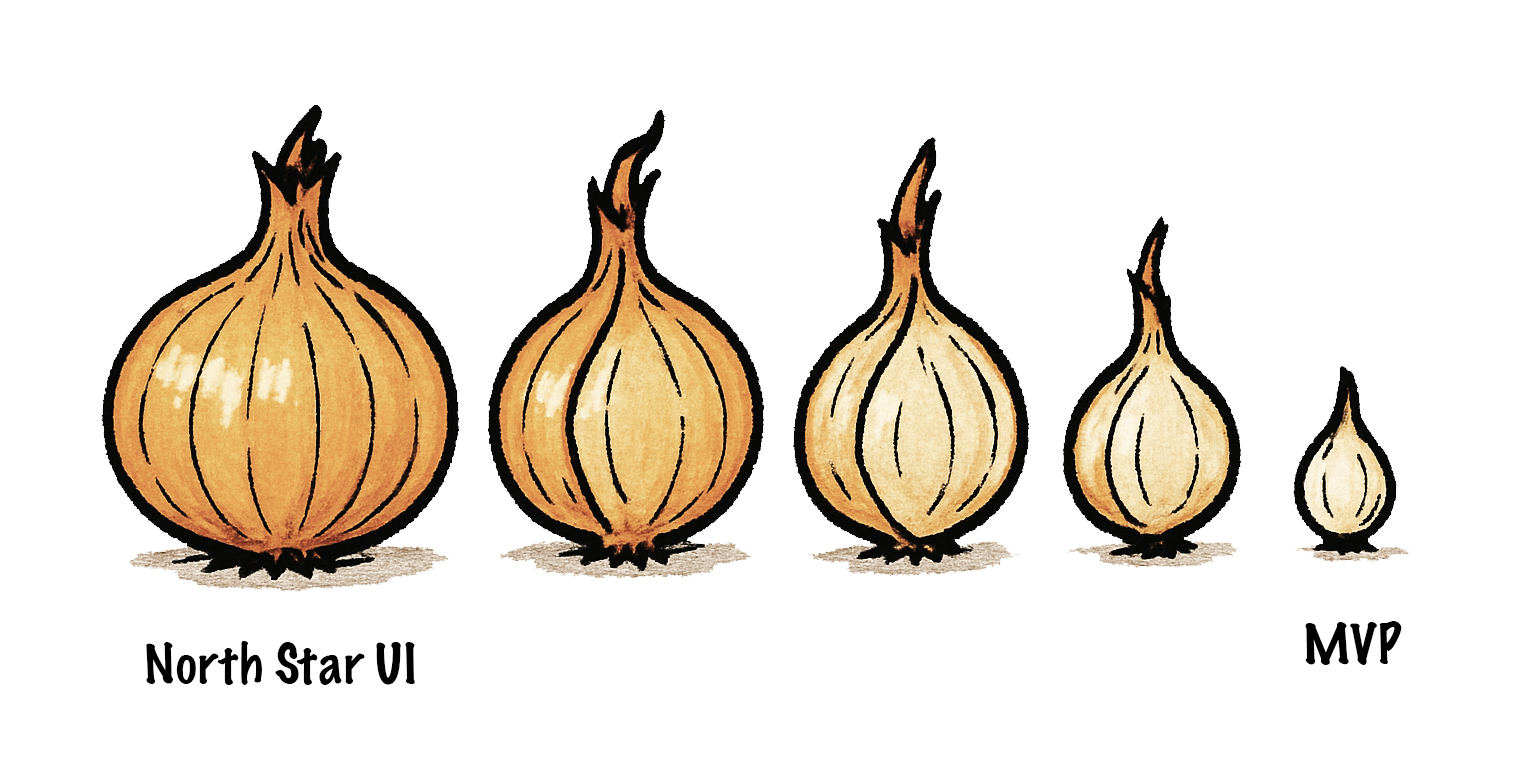

Whether you realize it or not, every shipping goal is always derived from the North Star, like peeling layers off an onion. In some contexts the process of breaking down a bigger shipping goal into milestones that can ship independently is even called layering.

The process is so ingrained, so automatic, that most product designers don’t realize they are doing it. They go from hovercar to car so quickly they barely realize the hovercar was there to begin with. Thinking about the North Star is taboo — who has time for daydreaming? We must ship, yesterday!

But the hovercar is fundamental. Without it, there is no skateboard — you can’t reduce the unknown. When designing it is not an explicit part of the process, the result is that the main driver of all product design decisions is something that can never be explicitly discussed and debated like any other design decision. In what universe is that efficient?

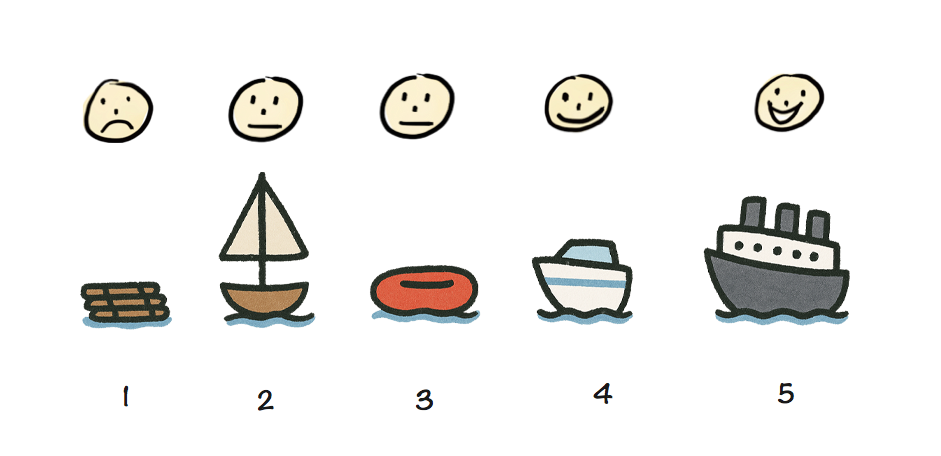

A skateboard might be a good MVP if your ultimate vision is a hovercar, but it would be a terrible minimum viable cruise ship — you might want to try a wooden raft for that.

A skateboard may be a great MVP for a car, but a terrible MVP for a cruise ship.

Making the North Star taboo doesn’t make it disappear (when did that ever work?).

It just means that everyone is following a different version of it. And since MVPs are products of the North Star, this will manifest as difficulty reaching consensus at every step of the way.

The product team will disagree on whether to ship a skateboard or a wooden raft, then on whether to build a scooter or a simple sailboat, then on whether to work on a speedboat or a yacht, and so on. It will seem like there is so much disconnect that every decision is hard, but there is actually only one root disconnect that manifests as multiple because it is never addressed head on.

When the North Star is not clearly articulated, everyone has their own.

Here is a story that will sound familiar to many readers:

A product team is trying to design a feature to address a specific user pain point. Alice has designed an elegant solution that addresses not just the problem at hand, but several prevalent longstanding user pain points at once — an eigensolution. She is aware it would be a little trickier to implement than other potential solutions, but the increase in implementation effort is very modest, and easily offset by the tremendous improvement in user experience. She has even outlined a staged deployment strategy that allows it to ship incrementally, adding value and getting customer feedback earlier.

Excited, she presents her idea to the product team, only to hear engineering manager Bob dismiss it with “this is scope creep and way too much work, it’s not worth doing”. However, what Bob is actually thinking is “this is a bad idea; any amount of work towards it is a waste”. The design session is now derailed; instead of debating Alice’s idea on its merits, the discussion has shifted towards costing and/or reducing effort. But this is a dead end because the amount of work was never the real problem. In the end, Alice wants to be seen as a team player, so she backs off and concedes to Bob’s “simpler” idea, despite her worries that it is overfit to the very specific use case being discussed, and the product is now worse.

Arguing over effort feels safer and less confrontational than debating vision — but is often a proxy war. Additionally, it is not productive. If the idea is poor, effort is irrelevant. And once we know an idea is good and believe it to our core, we have more incentive to figure out implementation, which often proves to be easier than expected once properly investigated. Explicitly fleshing out the Hovercar strips away the noise and brings clarity.

When we answer the questions above in order and reach consensus on the North Star before moving on to the compromises, we know what is an actual design decision and what is a compromise driven by practical constraints. Articulating these separately allows us to discuss them separately. It is very hard to evaluate tradeoffs collaboratively if we are not on the same page about what we are trading off and how much it’s worth. You need both the cost and the benefit to do a cost-benefit analysis!

Additionally, fleshing the North Star out separately ensures that everyone is on the same page about what is being discussed. All too often have I seen early design sessions where one person is discussing the skateboard, another the bicycle, and a third one the hovercar, no-one realizing that the reason they can’t reach consensus is that they are designing different things.

Conventional wisdom is that we strip down the North Star to an MVP, ship that, then iterate based on user input. With that process, our actual vision never really gets evaluated and by the time we get to it, it has already changed tremendously.

But did you know you can actually get input from real users without writing a single line of code?

Believe it or not, you don’t need to wait until a UI is prototyped to user test it. You can user test a low-fi paper prototype or even a wireframe. This is widely known in usability circles, yet somehow almost unheard of outside the field. The user tells you where they would click or tap on every step, and you mock the UI’s response by physically manipulating the prototype or showing them a wireframe of the next stage.

Obviously, this works better for some types of products than others. It is notably hard to mock rich interactions or UIs with too many possible responses. But when it does work, its Impact/Effort ratio is very high; you get to see whether your core vision is on the right track, and adjust your MVP accordingly.

It can be especially useful when there are different perspectives within a team about what the North Star might be, or when the problem is so novel that every potential solution is low-confidence. No-one’s product intuition is always right, and there is no point in evaluating compromises if it turns out that even the “perfect” solution was not actually all that great.

So far, we have discussed the merits of designing our North Star, assuming we will never be able to ship it. However, in many cases, simply articulating what the North Star is can bring it within reach. It’s not magic, just human psychology.

Once we have a North Star, we can use it to evaluate proposed solutions: How do they relate to it? Are they a milestone along a path that ends at the North Star? Do they actively prevent us from ever getting there? Prioritizing solutions that get us closer to the North Star can be a powerful momentum building tool.

Humans find it a lot easier to make one more step along a path they are already on than to make the first step on an entirely new path. This is well-established in psychology and often used as a technique for managing depression or executive dysfunction. However, it applies to anything that involves humans, and that includes product design.

Once we’re partway there, it naturally raises the question: can we get closer? How much closer? Even if we can’t get all the way there, maybe we can get close enough that the remaining distance won’t matter. And often, the closer you get, the more achievable the finish line gets.

In fact, sometimes simply reframing the North Star as a sequence of milestones rather than a binary goal can be all that is needed to make it feasible. For an example of this, check out the CSS Nesting case study below.

In my 20 years of product design, I have seen ephemeral constraints melt away so many times I have learned to interpret “unimplementable” as “kinda hard; right now”. Below are two examples from my own experience that I find particularly relevant: one around survey UI, and one around a CSS language feature.

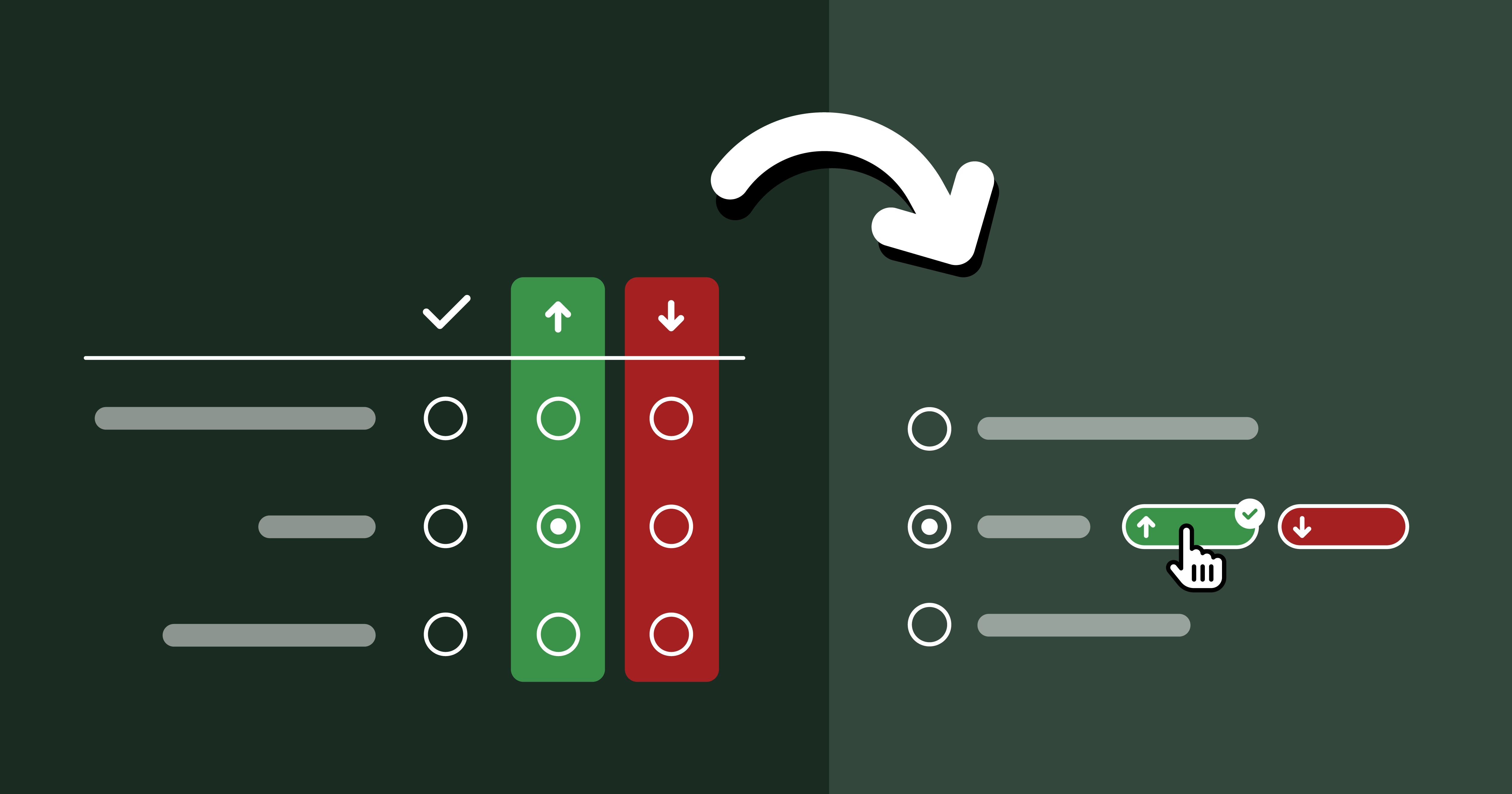

The case study is described at length in Context Chips in Survey Design: “Okay, but how does it feel?”. In a nutshell, the relevant bits are:

Here is a dirty little secret about software engineering (and possibly any creative pursuit): neither feasibility nor effort is fixed for a given task. Engineers are not automatons that will implement everything with the same energy and enthusiasm. They may implement product vision they disagree with, but you will be getting very poor ROI out of their time.

Investing the time and energy to get engineers excited can really pay dividends. When good engineers are excited, they become miracle workers.

In fact, engineering momentum is often all that is needed to make the infeasible feasible. It may seem hard to fit this into the crunch of OKRs and KPIs, but it’s worth it; the difference is not small, it is orders of magnitude. Things that were impossible or insurmountable become feasible, and things that would normally take weeks or months get done in days.

One way to build engineering momentum is to demonstrate the value and utility of what is being built. All too often, product decisions are made in a vacuum, based on gut feelings and assumptions about user needs. Backing them up with data, such as usability testing sessions, is an excellent way to demonstrate (and test!) their basis. When possible, having engineers observe user testing sessions firsthand can be much more powerful than secondhand reports.

Sometimes high effort things just take a lot of hard work and there is no way around it. Other times, feasibility is just one good idea away.

One of my favorite examples, and something I’m proud to have helped drive, is the relaxed CSS Nesting syntax, now shipped in every browser. It is such an amazing case study on the importance of having an explicit and consensus-backed North Star UI [2].

In a nutshell, CSS Nesting was a (then new) CSS syntax that let developers better organize their code by reducing repetition.

```css
table.browser-support {
  border-collapse: collapse;
}

table.browser-support th,
table.browser-support td {
  border: 1px solid silver;
}

@media (width < 600px) {
  table.browser-support,
  table.browser-support tr,
  table.browser-support th,
  table.browser-support td {
    display: block;
  }
}

table.browser-support th {
  border: 0;
}

table.browser-support td {
  background: yellowgreen;
}

table.browser-support td:empty {
  background: red;
}

table.browser-support td > a {
  color: inherit;
}
```

```css
table.browser-support {
  border-collapse: collapse;

  @media (width < 600px) {
    &, tr, th, td {
      display: block;
    }
  }

  th, td {
    border: 1px solid silver;
  }

  th {
    border: 0;
  }

  td {
    background: yellowgreen;

    &:empty {
      background: red;
    }

    > a {
      color: inherit;
    }
  }
}
```

This is one of the rare cases where the North Star was well known in advance, since the syntax was already well established in developer tooling (CSS preprocessors). Instead, the big challenge was navigating the practical constraints, since CSS implemented in browsers has different performance characteristics, so a syntax that is feasible for tooling may be out of reach for a browser. In this case, the North Star syntax had been ruled out by browser engineers due to prohibitive parsing performance [3], so we had to design a different, more explicit syntax that could be parsed more efficiently.
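To make the lookahead problem concrete, here is a minimal Python sketch. It is not browser code: the whitespace tokenizer and the `classify` helper are hypothetical simplifications made up for illustration. Inside a rule, both `color: red;` and `a:hover { … }` begin with an identifier followed by a colon, so a parser only knows which one it is reading once it reaches a `;` or a `{`, and nothing bounds how many tokens come before that.

```python
def classify(source: str) -> tuple[str, int]:
    """Classify the start of `source` as a declaration or a nested rule.

    Returns the kind plus how many tokens had to be read to decide.
    Hypothetical simplification: tokens are whitespace-separated, and
    strings, comments, and nested blocks are ignored.
    """
    tokens = source.replace("{", " { ").replace(";", " ; ").split()
    for count, token in enumerate(tokens, start=1):
        if token == "{":
            return "nested rule", count
        if token == ";":
            return "declaration", count
    # Ran out of input without seeing either terminator.
    return "unknown", len(tokens)


print(classify("color: red;"))
print(classify("a:hover > b:focus c:active { color: red; }"))
```

The longer the selector, the more tokens the sketch has to read before it can answer, which is the unbounded part of the problem.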

At this point, it is important to note that CSS Nesting is a feature that is very heavily used once available. Conciseness and readability are paramount, especially when conciseness is the sole purpose of the feature in the first place!

Initial attempts for a syntax that satisfied these technical requirements introduced a lot of noise, making the syntax tedious to write and noisy to read. Even worse, these attempts were actively incompatible with the North Star syntax, as well as other parts of the language (namely, the @scope rule). This meant that even if the North Star syntax became feasible later, CSS would need to forever support syntax that would then have no purpose, and would only exist as a wart from the past, just like HTML doctypes.

Once Google became very keen to ship Nesting (driven by State of CSS 2022, which showed it as the top missing CSS feature), a small subset of the CSS Working Group, led by Elika Etemad and myself, met to explore alternatives and produced four competing proposals. The one that the group voted to adopt [4] was the one I designed explicitly to answer the question: If the North Star syntax is out of the question right now, what is the largest subset of it that is feasible?
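As a rough illustration of what “largest feasible subset” can mean for a parser, here is a minimal Python sketch. It assumes, going only by the proposal’s “Non-letter start” nickname, that the intermediate syntax let a parser decide from the first character alone: anything that starts like an identifier is a declaration, anything else opens a nested rule. The `classify_by_first_char` helper and the exact character set are hypothetical, not taken from any specification.

```python
def classify_by_first_char(item: str) -> str:
    """Decide declaration vs. nested rule from the first character alone.

    Assumption (from the proposal's name only): anything that starts like
    an identifier is parsed as a declaration; anything else starts a
    nested rule. No lookahead past the first character is needed.
    """
    first = item.lstrip()[:1]
    if not first:
        raise ValueError("empty item")
    if first.isalpha() or first in ("-", "_"):
        return "declaration"
    return "nested rule"


for item in (
    "color: inherit;",
    "&:empty { background: red; }",
    "> a { color: inherit; }",
    "td { background: yellowgreen; }",
):
    print(f"{item!r}: {classify_by_first_char(item)}")
```

Under this assumed rule the last item is read as a declaration, so a nested rule for a bare element selector would need a leading symbol (for example `& td`). Everything that does parse stays valid in the North Star syntax, which is what keeps the path forward open.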

Once we got consensus on this intermediate syntax, I started exploring whether we could get any closer to the 🌟, even proposing an algorithm that would reduce the number of cases that required the slower parsing to essentially an edge case. A few other WG members joined me, with my co-TAG member Peter Linss being most vocal.

This is a big advantage of North Star compatible designs: it is much easier to convince people to move a little further along on the path they are already on, than to move to a completely different path. With a bit of luck, you may even find yourself implementing an “infeasible” North Star without even realizing it, one little step at a time.

We initially faced a lot of resistance from browser engineers, until eventually a brilliant Google engineer, Anders Ruud, and his team experimented with variations of my proposed algorithm and actually closed in on a way to implement the North Star syntax in Chrome. The rest, as they say, is history. 🌟

Hopefully by now you’re convinced about the value of investing time in reaching alignment on an explicit North Star that has buy-in from the entire product team.

A common misconception is that the North Star is a static goal that prevents you from adapting to new data, such as customer feedback. But often, your North Star will change a lot over time, and that’s okay. Having an initial destination does not take away your ability to course correct. That’s not giving up, it’s adapting.

And yes, it’s true that many product teams do use a vision-led approach — they just start from the car, not the hovercar. While that confers some of the benefits above, there is still an implicit reduction happening, because the hovercar is still there in the back of their mind.

Note that for this framework to be beneficial, it is important that everyone is on the same page and understands the steps, benefits, and goals of this approach. Co-designing a North Star with a team that sees the process as a pointless thought experiment will only add friction and will not confer any of these benefits. Also, this is a mindset that can only work when applied top-down. If you are not a decision-maker at your place of work and leadership is not on board, you will have a very hard time if you try to push this ad hoc, without first getting leadership buy-in. You can try sending them a link to this blog post!

If this post resonated, please share your own case studies in the comments. Or, if you decide to give this framework a try, I’d love to hear how it went!

[1] Indeed, looks like I’m not the first to draw a parallel between the two!

[2] I even did an entire talk about it at Web Unleashed, with a lot more technical detail than what I have included here.

[3] For any Compilers geeks out there that want all the deets: it required potentially unbounded lookahead since there is no fixed number of tokens a parser can read and be able to tell the difference between a selector and a declaration.

[4] Originally dubbed “Lea’s proposal”, and later “Non-letter start proposal”, but became known as Option 3 from its position among the five options considered (including the original syntax).

The story of how a weird little UI to collect sentiment alongside survey responses defied constraints and triumphed over skepticism through usability testing.

One would think that we’ve more or less figured survey UI out by now. Multiple choice questions, checkbox questions, matrix questions, dropdown questions, freeform textfields, numerical scales, what more could one possibly need?!

And yet, every time Google sponsored me to lead one of the State Of … surveys, and especially the inaugural State of HTML 2023 Survey, I kept hitting the same wall; I kept feeling that the established options for answering UIs were woefully inadequate for balancing the collection of good insights with minimal friction for end-users.

The State Of surveys used a completely custom survey infrastructure, so I could often (but not always) convince engineering to implement new question UIs. After joining Font Awesome, I somehow found myself leading yet another survey, despite swearing never to do this again. 🥲 Alas, building a custom survey UI was simply not an option in this case; I had to make do with the existing options out there [1], so I felt this kind of pain to my core once again.

So what are these cases where the existing answering UIs are inadequate, and how could better ones help? I’m hoping this case study will be Part 1 of a series around how survey UI innovations can help balance tradeoffs between user experience and data quality, though this is definitely the one I’m most proud of, as it was such a bumpy ride, but it was all worth it in the end.

Unlike Devographics, surveys are not FA’s core business, so the Impact/Effort tradeoff simply wasn’t there for a custom UI, at least at this point in time. I ended up going with Tally, mainly due to the flexibility of its conditional logic and its support for code injection (which among other things, allowed me to use FA icons — a whopping 120 different ones!). ↩︎